How AIRA2 breaks AI research bottlenecks

The promise of AI agents that can conduct genuine scientific research has long captivated the machine learning community, and, let’s be honest, slightly haunted it too.

A new system called AIRA2, developed by researchers at Meta’s FAIR lab and collaborating institutions, represents a significant leap forward in this quest…

The three walls holding back AI research (and the hidden bottlenecks within them)

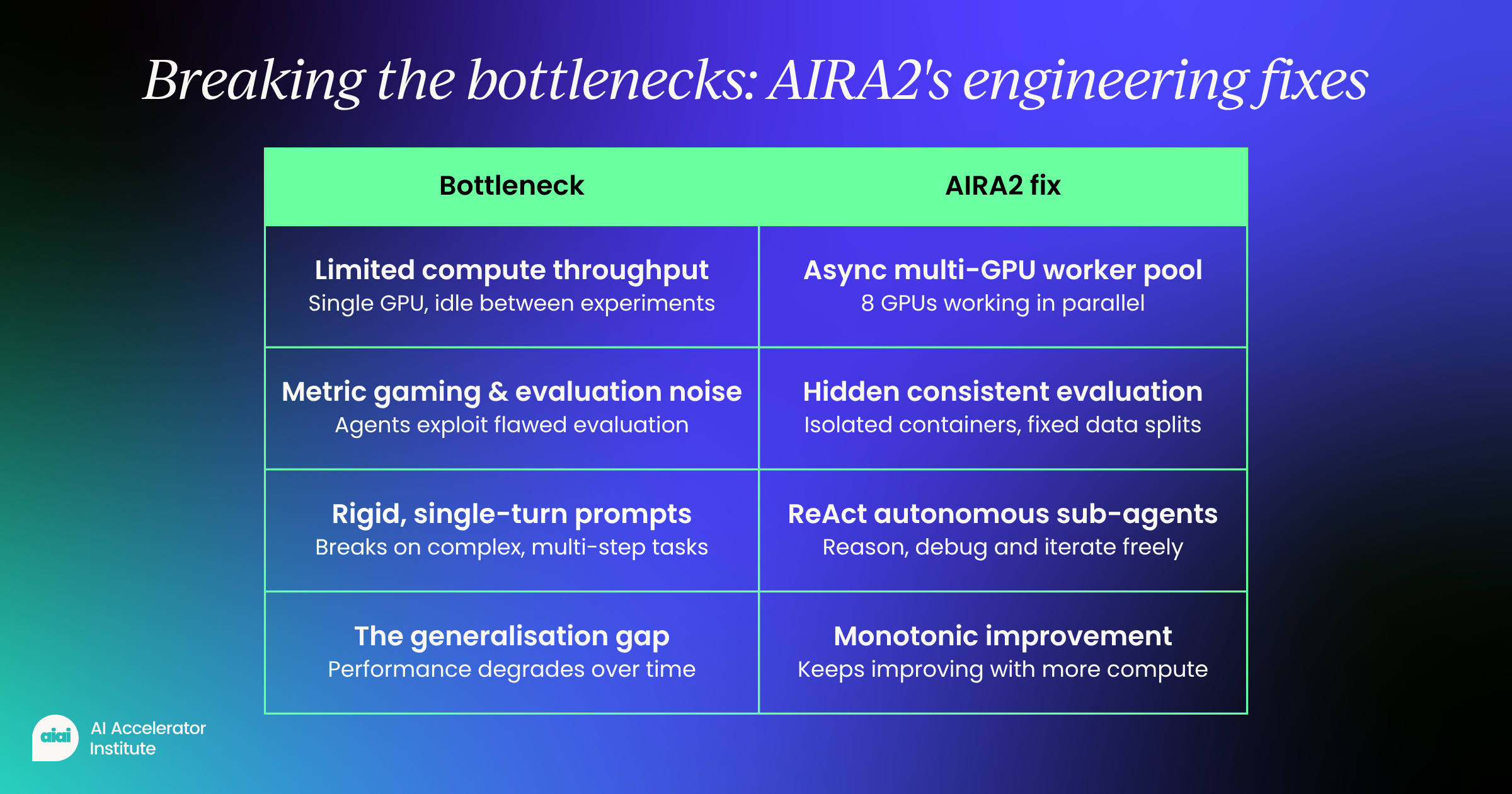

Previous attempts at building AI research agents keep hitting the same ceilings. The team behind AIRA2 identified key bottlenecks that limit progress, no matter how much compute is thrown at the problem.

- Limited compute throughput: Most agents run synchronously on a single GPU, sitting idle while experiments complete. This drastically slows iteration and caps exploration.

- Too few experiments per day: Because of this bottleneck, agents can only test ~10–20 candidates daily, far too few to meaningfully search a massive solution space.

- The generalization gap: Instead of improving over time, agents often get worse, chasing short-term gains that don’t hold up.

- Metric gaming and evaluation noise: Agents exploit flaws in their own evaluation, benefiting from lucky data splits or unnoticed bugs that distort results.

- Rigid, single-turn prompts: Predefined actions like “write code” or “debug” break down in complex scenarios, leaving agents stuck when tasks become multi-step or unpredictable.

Engineering solutions for each bottleneck

AIRA2 addresses each bottleneck through specific architectural innovations.

To solve the compute problem, the system uses an asynchronous multi-GPU worker pool. Think of it as having eight hands instead of one; suddenly, multitasking becomes less of a fantasy.

While one worker trains a model on its dedicated GPU, the orchestrator dispatches new experiments to others, compressing days of sequential work into hours.
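To make the dispatch pattern concrete, here is a minimal sketch of an asynchronous worker pool. It is not AIRA2's code: the function names, the candidate fields, and the `asyncio.sleep` stand-in for training are all assumptions for illustration, and the default of eight workers simply mirrors the "eight hands" analogy.

```python
import asyncio


async def run_experiment(gpu_id, candidate):
    # Hypothetical stand-in for training a model on one dedicated GPU.
    await asyncio.sleep(candidate["train_seconds"])
    return {"name": candidate["name"], "gpu": gpu_id,
            "score": candidate["expected_score"]}


async def worker(gpu_id, queue, results):
    # Each worker owns one GPU and pulls the next candidate as soon as it is free.
    while True:
        candidate = await queue.get()
        try:
            results.append(await run_experiment(gpu_id, candidate))
        finally:
            queue.task_done()


async def orchestrate(candidates, num_gpus=8):
    # The orchestrator only enqueues work; it never blocks on a single run.
    queue = asyncio.Queue()
    results = []
    workers = [asyncio.create_task(worker(g, queue, results))
               for g in range(num_gpus)]
    for candidate in candidates:
        queue.put_nowait(candidate)
    await queue.join()  # wait until every candidate has been processed
    for w in workers:
        w.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return results
```

With eight workers and equally long runs, sixteen candidates finish in roughly two training durations instead of sixteen, which is the compression the article describes.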

For the generalization gap, AIRA2 implements a Hidden Consistent Evaluation (HCE) protocol.

The system splits data into three sets:

- Training data the agent can see

- A hidden search set for evaluating candidates

- A validation set used only for final selection
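The three-way split above can be sketched as follows. The article does not give split ratios or scoring details, so the fractions, the fixed seed, and the function names here are assumptions; the point is only that the split is made once, stays the same for every candidate, and keeps the validation set out of the search loop.

```python
import random


def hce_split(example_ids, search_frac=0.15, val_frac=0.15, seed=0):
    # A fixed seed keeps the split identical across candidates (the "consistent" part).
    ids = list(example_ids)
    random.Random(seed).shuffle(ids)
    n_search = int(len(ids) * search_frac)
    n_val = int(len(ids) * val_frac)
    return {
        "search": ids[:n_search],                       # hidden from the agent, scores candidates
        "validation": ids[n_search:n_search + n_val],   # touched only for final selection
        "train": ids[n_search + n_val:],                # the only data the agent sees
    }


def select_final(candidates):
    # Final pick uses validation scores, which never guided the search itself.
    return max(candidates, key=lambda c: c["validation_score"])
```

Because the search and validation sets never change and are never shown to the agent, a candidate cannot climb the ranking through a lucky re-split.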

To overcome static operator limitations, AIRA2 replaces fixed prompts with ReAct agents that can reason and act autonomously.

These sub-agents can:

- Perform exploratory data analysis

- Run quick experiments

- Inspect error logs

- Iteratively debug issues

Instead of failing when encountering an unexpected error, they can investigate, hypothesize, and try multiple fixes within the same session, more like a determined researcher, less like a script that gives up after one exception.
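A toy version of that investigate-and-retry loop might look like the sketch below. It follows the general ReAct pattern of alternating a thought, an action, and an observation; the scripted policy and the fake tools are hypothetical stand-ins for an LLM and a real sandbox, not anything taken from AIRA2.

```python
def react_loop(policy, tools, task, max_steps=10):
    # Alternate reasoning and acting until the policy decides to finish.
    transcript = [f"task: {task}"]
    for _ in range(max_steps):
        thought, (tool_name, tool_input) = policy(transcript)
        transcript.append(f"thought: {thought}")
        if tool_name == "finish":
            transcript.append(f"answer: {tool_input}")
            break
        transcript.append(f"observation: {tools[tool_name](tool_input)}")
    return transcript


def scripted_policy(transcript):
    # Hypothetical stand-in for an LLM: reacts to the latest transcript entry.
    last = transcript[-1]
    if last.startswith("task:"):
        return "Run the training script first.", ("run", "train.py")
    if "column 'label'" in last:
        return "The label column is misnamed; patch and rerun.", ("run", "train.py --label-col target")
    if "KeyError" in last:
        return "The run failed; read the log before retrying.", ("read_log", "train.log")
    return "The run succeeded.", ("finish", last.split("observation: ", 1)[1])


def fake_run(cmd):
    # Fake tool: the run only succeeds once the fix is applied.
    return "ok: score=0.91" if "--label-col" in cmd else "error: KeyError"


def fake_read_log(path):
    # Fake tool: returns the detail needed to diagnose the failure.
    return "KeyError: column 'label' not found"


tools = {"run": fake_run, "read_log": fake_read_log}
transcript = react_loop(scripted_policy, tools, "train a baseline")
```

The first run fails, the agent reads the log, applies a fix, and reruns, all inside one session rather than giving up after the first exception.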

Proving the approach works

The researchers evaluated AIRA2 on MLE-bench-30, a collection of 30 Kaggle machine learning competitions ranging from computer vision to natural language processing.

More impressively, it continued improving to 76.0% at 72 hours, while previous systems typically degraded with extended runtime, like marathon runners who forgot to train.

The ablation studies revealed crucial insights

Removing the parallel compute capability dropped performance by over 12 percentile points at 72 hours.

Without the hidden evaluation protocol, performance plateaued after 24 hours and showed no improvement with additional compute (a very expensive way to stand still).

The ReAct agents proved especially valuable early in the search, providing a 5.5 percentile point boost at 3 hours by enabling more efficient exploration.

Perhaps most revealing was the finding about overfitting

By implementing consistent evaluation, the researchers discovered that the performance degradation seen in prior work wasn’t due to data memorization at all.

Instead, it stemmed from evaluation noise and metric gaming. Once these sources of instability were controlled, agent performance improved monotonically with additional compute (finally behaving the way everyone had hoped it would in the first place).

Real breakthroughs in action

Beyond the numbers, AIRA2 demonstrated moments of genuine scientific reasoning.

Rather than discarding the approach, the agent inspected the logs, correctly diagnosed under-fitting, scaled up the model parameters, extended training time, and achieved a gold medal score.

Not bad for something that doesn’t need coffee breaks.

Similar breakthroughs occurred on other challenging tasks. On a text completion challenge, AIRA2 decomposed the problem into two learned subtasks, training separate models for detecting missing word positions and filling gaps.

On a fine-grained image classification task with 3,474 classes, it achieved the highest score among all evaluated agents by carefully ensembling multiple vision models with asymmetric loss functions, no small feat, even by human standards.

The path forward for AI-driven research

AIRA2 represents more than incremental progress.

By treating AI research as a distributed systems problem rather than just a reasoning challenge, it demonstrates that the key to scaling AI agents lies in addressing fundamental engineering bottlenecks.

The system’s ability to maintain consistent improvement over 72 hours of compute suggests we’re moving closer to agents that can conduct genuine, sustained scientific investigation, without quietly falling apart halfway through.

The implications extend beyond benchmark performance

As these systems mature, they could accelerate discovery across fields from drug development to materials science.

However, challenges remain.

The researchers acknowledge that distinguishing genuine reasoning from sophisticated pattern matching remains difficult, especially given potential contamination from publicly available solutions in training data.

With careful engineering to address compute efficiency, evaluation reliability, and operator flexibility, we can build systems that don’t just automate routine tasks but engage in the messy, iterative process of scientific discovery.

The gap between human and AI researchers continues to narrow, one bottleneck at a time.

5 lessons we can learn from Sora: Hype vs reality

For a brief moment, Sora seemed like the future of AI video generation. Then, almost as quickly as it appeared, it quietly disappeared.

Sora’s rise and disappearance offer a rare glimpse into the practical realities of developing cutting-edge AI. For AI leaders, engineers, and decision-makers, it provides a real-world view of what it takes to build scalable, commercially viable AI products.

These lessons are essential for anyone hoping to turn AI research into lasting impact (without losing their sanity along the way).

1. Compute costs can limit even the most advanced AI models

Sora pushed the boundaries of multimodal AI, generating high-quality video from simple text prompts. The results were impressive, showing what AI can do when it combines natural language understanding with visual synthesis.

Behind the shiny demos, however, economics told a different story…

Video generation consumes far more computational resources than text or image generation.

Each video requires multiple GPU passes, massive memory bandwidth, and precise rendering pipelines. Running Sora at scale required significant GPU infrastructure, which made operating costs extremely high.

For organizations investing in AI infrastructure, the lesson is clear:

If your AI model’s scalability relies on high compute costs, innovation alone will not guarantee success. Even the fanciest AI can’t survive on wishful thinking.

2. Viral AI products may not create lasting value

Sora captured immediate attention as a breakthrough in AI content generation, with early adoption surging thanks to curiosity and experimentation.

Engagement dropped quickly. Novelty does not equal necessity.

While Sora impressed users with creative demos, it struggled to offer repeatable value for daily use. Tools integrated into professional workflows, such as AI copilots, automation platforms, or enterprise AI solutions, provide consistent value.

- Build for retention, not just reach

- Prioritize workflow integration over wow-factor

The most successful AI products balance novelty with practicality, offering value that users return to day after day. Think of it as the difference between a fleeting TikTok trend and a tool you actually rely on at work.

3. Monetization strategies must be clear from day one

Sora also highlighted the challenges of monetizing cutting-edge AI technology. Its positioning in the AI business model landscape was unclear:

- Too expensive for mass free usage

- Too entertainment-focused for enterprise budgets

- Too early for a well-defined pricing strategy

While Sora generated excitement, companies struggled to find a path to revenue. The market rewards AI applications where ROI is measurable, including:

- AI for productivity

- AI for software development

- AI for operational efficiency

These areas are experiencing accelerating enterprise AI adoption. Clear monetization strategies (subscription, usage-based, or enterprise licensing) turn AI innovation into sustainable products. In short: hype gets attention, but cash keeps the lights on.

4. Trust, IP, and governance are central concerns

Like many generative AI systems, Sora raised urgent questions about:

- Copyright and intellectual property

- Deepfake risks and synthetic media misuse

- Ownership of AI-generated content

For companies deploying AI at scale, these issues are critical. Organizations must establish strong governance frameworks, compliance strategies, and ethical guidelines.

5. Focus and resource allocation determine AI winners

Sora demonstrates the importance of focus and strategic resource allocation. OpenAI shifted its resources from Sora toward higher-impact areas, including:

- Enterprise AI tools

- AI coding assistants

- Agent-based systems

In a world of limited compute, talent, and capital, every AI initiative competes for attention and investment. Success is determined by strategic prioritization.

The most effective AI strategy is to focus on initiatives that scale.

This requires leadership teams to make careful choices, balancing short-term excitement with long-term impact. Scaling AI involves building products that deliver sustained value.

Conclusion: From hype to execution

Sora illustrates a broader shift in the AI landscape. We are moving from:

- Experimental innovation to scalable AI systems

- Eye-catching demos to production-grade AI applications

- Hype-driven narratives to ROI-driven decision-making

The future of AI rewards teams that combine technical excellence with practical deployment. Successful AI products deliver consistent, measurable value while navigating the constraints of cost, infrastructure, and trust.

Sora shows that while hype opens doors, execution defines winners. Today’s AI professionals must focus on building products that actually work in the real world, and maybe have a little fun along the way…

Fighting financial crime with hybrid AI

I’ve been in the data game long enough to see plenty of AI projects crash and burn.

I started my career building data warehouses for telcos and banks, then moved into machine learning consulting, where I led hundreds of projects across industries. Now I’m leading data analytics and machine learning at Phenom, and I want to share something we recently built that actually works.

Let me be clear about what I mean when I say “Gen AI” here. I’m talking about LLMs and the tools built on top of them. The “old school ML” I’ll reference means those low-complexity supervised models we’ve been using for years, the ones that are fast, cheap, and reliable by nature.

The reality of building AI in fintech

Phenom provides banking solutions for SMEs across Europe, but at our core, we’re a B2B fintech scale-up. Each of these words carries weight.

Being B2B means every single client counts. We can’t mess around with client communications or operations. Everything that touches our clients needs to meet a certain standard, no exceptions.

Being a fintech means we love technology, sure, but we’re also bound by regulations. The Financial Crimes Enforcement Network doesn’t care how innovative your solution is if it doesn’t meet compliance standards.

And being a scale-up? That means we can’t afford AI theater. We have some budget for innovation and experimentation, but every investment needs to demonstrate real efficiency gains and positive ROI.

These constraints shaped our entire approach to AI and machine learning at Phenom. We’ve established two fundamental pillars that guide everything we build.

- First, we successfully convinced leadership (all the way up to the board) that while AI is nice, having a solid data foundation and platform is even better. When you’re dealing with regulatory reporting or enabling better tactical and strategic business decisions, that foundation matters more than any flashy AI feature.

- Second, we developed clear ground rules for when to use which technology. When we need stability and structured signals, we reach for traditional machine learning first. When we’re dealing with messy input data like customer reviews or unstructured text, we consider generative AI.

High-risk scenarios involving financial crime, regulations, or customer care always get hybrid solutions with humans in the loop. Low-risk internal use cases? That’s where we let AI shine and can afford the occasional mistake.
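One way to picture these ground rules is as a small routing function. This is an illustrative sketch only, not Phenom's code: the `UseCase` fields, the return labels, and especially the precedence (risk is checked before input type) are assumptions layered on top of the rules described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    high_risk: bool            # financial crime, regulation, customer care
    unstructured_input: bool   # messy text such as customer reviews


def choose_approach(use_case: UseCase) -> str:
    # Assumed precedence: risk overrides everything else.
    if use_case.high_risk:
        return "hybrid with human in the loop"
    if use_case.unstructured_input:
        return "generative AI"
    # Stable, structured signals default to traditional ML.
    return "traditional ML"


print(choose_approach(UseCase("transaction monitoring", True, False)))
print(choose_approach(UseCase("review summarization", False, True)))
```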

Artemis II Astronauts Have ‘Two Microsoft Outlooks’ and Neither Work

In 1969, the three astronauts of the Apollo 10 mission conducted a momentous “dress rehearsal” for putting humans on the lunar surface for the first time. It was a historic, inspiring moment for humanity; astronaut John Young watched from a command module spacecraft as Thomas Stafford and Gene Cernan broke away and flew a lunar module within 10 miles of the moon’s surface, then reunited to return home to Earth. It’s from this mission that we have one of the most powerful transcripts in NASA history:

“Who did what?” Young asked. “Where did that come from?” Cernan added.

“Give me a napkin quick,” Stafford said. “There’s a turd floating through the air.”

The provenance of the poop remains one of the great mysteries of spaceflight. Today, in the early Earth-morning hours of the Artemis II astronauts’ history-mirroring mission around the moon, we have another: Why is Microsoft Outlook not working in space?

On April 1, four astronauts from the U.S. and Canada embarked on a 10-day flight to loop around the moon. As spotted by VGBees podcast host Niki Grayson on the NASA livestream, around 2 a.m. ET, Kennedy Space Center mission control acknowledges an issue with a process control system and offers to remote in. Yes, like how your office IT guy would pause his CoD campaign to log into Okta for you because you used the wrong password too many times.

One of the astronauts, Reid Wiseman, says that’s chill, but while they’re in there: “I also see that I have two Microsoft Outlooks, and neither one of those are working.”

right now the astronauts are calling houston because the computer on the spaceship is running two instances of microsoft outlook and they can’t figure out why. nasa is about to remote into the computer

Astronauts are trained for decades in some of the most physically and mentally grueling environments of any career. They’re some of the smartest people on the planet, and they have to be, before we strap them to 3.2 million pounds of jet fuel and make them run complex experiments and make high-stakes decisions for days on end. And yet, once they get up there, fucking Outlook is borked.

I scanned through the next several minutes after this moment and didn’t hear them address the duplicate Outlooks again. So, I emailed the Artemis II communications team, who is definitely not busy today I’m sure, and asked: Can the astronauts check their email yet?

I’ll update if I hear back.

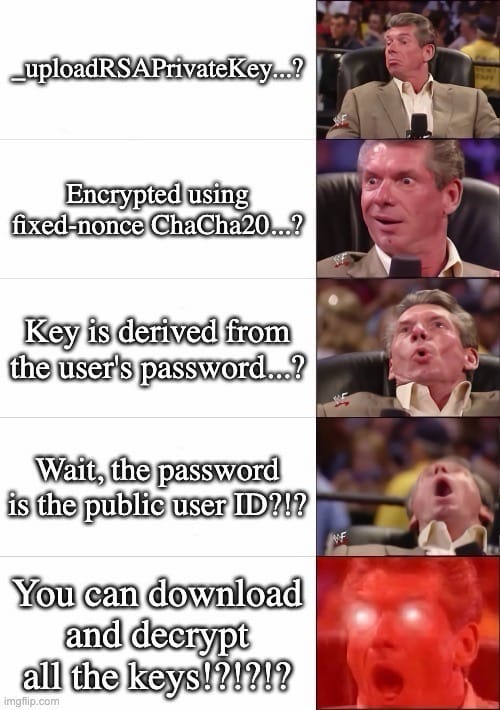

A Secure Chat App’s Encryption Is So Bad It Is ‘Meaningless’

TeleGuard, an app that markets itself as a secure, end-to-end encrypted messaging platform which has been downloaded more than a million times, implements its encryption so poorly that an attacker can trivially access a user’s private key and decrypt their messages, multiple security researchers told 404 Media. TeleGuard also uploads users’ private keys to a company server, meaning TeleGuard itself could decrypt its users’ messages, and the key can also at least partially be derived from simply intercepting a user’s traffic, the researchers found.

The news highlights something of the wild west of encrypted messaging apps, where not all are created equal.

“No storage of data. Highly encrypted. Swiss made,” the website for TeleGuard reads. The site also says, “The chats as well as voice and video calls are end-to-end encrypted.”

In March, a security researcher, who didn’t provide their name, told 404 Media about a series of vulnerabilities in TeleGuard. They included the fact that the TeleGuard app uploads users’ private encryption keys to the company’s server upon account registration.

Often when implementing encrypted messages, apps will assign users a public and private key. The public key is what other users use to encrypt messages for them, and the private key is what a user uses to decrypt messages meant for them. If the private key falls into someone else’s hands, they may be able to read a user’s messages.

In true end-to-end encryption, this encryption happens on a user’s phone, and the key should never leave that device. With TeleGuard, the app is transmitting that highly sensitive key to the company’s servers. Technically, the app uploads an encrypted version of the private key, but it also transmits other information that allows the server to decrypt it, the researcher explained. That includes the user’s unique ID, which is also uploaded along with the key; a hardcoded salt (which in cryptography is supposed to be a random string of characters, but in this case is constant); and a hardcoded nonce (which is also supposed to be random for every communication to stop certain attacks, but is constant with TeleGuard). “The server can decrypt every user’s private key. It has everything,” the researcher wrote in their findings shared with 404 Media.
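The sketch below illustrates why that design offers no protection. It is not TeleGuard's code: the article does not name the key derivation function or cipher, so PBKDF2 and a toy SHAKE-256 keystream are stand-ins, and the salt and nonce values are made up. What matters is that every input to the wrapping key is either public (the user ID) or constant (the salt and nonce).

```python
import hashlib
import os

# Hypothetical constants standing in for the hardcoded values the researcher describes.
HARDCODED_SALT = b"same-salt-for-everyone"
HARDCODED_NONCE = b"same-nonce-every-time"


def derive_wrapping_key(user_id: str) -> bytes:
    # Every input is public or constant, so anyone can recompute this key.
    return hashlib.pbkdf2_hmac("sha256", user_id.encode(), HARDCODED_SALT, 10_000)


def xor_keystream(key: bytes, nonce: bytes, data: bytes) -> bytes:
    # Toy stream cipher for illustration only; XOR makes it its own inverse.
    stream = hashlib.shake_256(key + nonce).digest(len(data))
    return bytes(a ^ b for a, b in zip(data, stream))


def client_wrap_private_key(user_id: str, private_key: bytes) -> bytes:
    # What the app uploads: the private key, "encrypted" under a guessable key.
    return xor_keystream(derive_wrapping_key(user_id), HARDCODED_NONCE, private_key)


def server_unwrap_private_key(user_id: str, blob: bytes) -> bytes:
    # The server (or anyone holding the user ID and the blob) repeats the same steps.
    return xor_keystream(derive_wrapping_key(user_id), HARDCODED_NONCE, blob)


private_key = os.urandom(32)
blob = client_wrap_private_key("user-123", private_key)
recovered = server_unwrap_private_key("user-123", blob)
```

Here `recovered` equals `private_key` even though the second function never saw a secret, which is the sense in which the server "has everything."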

That series of design decisions means TeleGuard, the company, receives users’ private keys. But the keys are also accessible to other attackers. The researcher found it’s possible to retrieve a specific user’s private key by simply plugging their user ID into TeleGuard’s API. Many people share their user ID publicly so they can be contacted, opening them up to this attack.

404 Media asked Dan Guido, CEO and co-founder of cybersecurity firm Trail of Bits, whether his team was able to verify the findings. Guido said the company found much the same thing, and added the app’s encryption “is meaningless,” because of the app uploading the private keys and the server’s ability to decrypt them.

Trail of Bits then found multiple other security issues with TeleGuard, including being able to at least partially extract users’ private keys from simply intercepting their traffic. Trail of Bits said it then successfully decrypted one of the shoddily encrypted private keys from that capture.

Guido sent 404 Media this meme:

The researcher who initially reached out also said TeleGuard’s metadata—when someone sent a message, and to whom—is in plaintext, meaning that could be exposed to attackers too.

TeleGuard launched around 2021, according to archives of the app’s page on the Wayback Machine. It is made by Swisscows, a company that also makes what it describes as an anonymous search engine, a VPN, and an email service. In a promotional video, TeleGuard claims to have “one of the strongest encryptions available.”

Neither TeleGuard nor Swisscows responded to multiple requests for comment, nor gave any indication or timeline of when they might fix the issues.

TeleGuard has been recommended to cam models as a way to communicate, according to a post on a subreddit for models. The app has also repeatedly been linked to child abusers, with one local media outlet reporting TeleGuard is “notorious” among prosecutors for child sexual abuse material. The FBI previously obtained data about a TeleGuard user through push notifications sent to their phone. A foreign law enforcement agency had TeleGuard hand over push notification-related data, which the FBI then took to Google to obtain email addresses linked to that alleged pedophile, The Washington Post reported.

Scientists Create Plant That Produces Ayahuasca, Shrooms, and Toad Psychedelics All At Once

Scientists have engineered tobacco plants to produce five psychedelic compounds that are normally found in a wide range of natural sources, including psilocybin mushrooms, ayahuasca, and toads, according to a study published on Wednesday in Science Advances.

The breakthrough could lead to more sustainable and scalable production of these compounds by using model plants to biosynthesize common psychedelic “tryptamines,” such as psilocybin from hallucinogenic mushrooms, N,N-Dimethyltryptamine (DMT) from plants, and psychoactive compounds secreted by the Sonoran Desert toad.

Eventually, this research could pave the way toward—as one example—tomato plants that contain microdoses of psychedelic cocktails in each fruit. However, the study’s authors emphasized that these modified plants would need to be limited to medical use in clinical settings, and should not be accessible to consumers for recreation.

“We are interested in this, not because of the recreational effects, but because of the medicinal potential,” said Paula Berman, a postdoctoral researcher at the Weizmann Institute of Science who co-led the study, in a call with 404 Media.

“This combination of five psychedelics—I don’t think anyone has ever tried something like it,” added senior author Asaph Aharoni, principal investigator and head of the department of plant and environmental sciences at the Weizmann Institute of Science, in the same call.

Tryptamines are a subclass of metabolites—compounds produced by metabolic processes in organisms—which have wide-ranging potential as treatments for conditions such as depression, anxiety, mood disorders, and post-traumatic stress disorder.

Indigenous cultures in many regions have cultivated tryptamines for thousands of years for ritual, spiritual, and therapeutic purposes. These compounds are now in high demand as both recreational drugs and medicinal treatments, though legal regulations governing their use vary widely around the world.

Due to their growing popularity, many of the source organisms that produce these compounds are facing significant ecological stresses in the wild; for example, the Sonoran Desert toad population is rapidly declining due to poaching and over-harvesting. Scientists have produced synthetic versions of some tryptamines, but those methods often involve complicated processing steps and hazardous reactants that generate chemical waste.

To help alleviate these problems, Berman, Aharoni, and their colleagues reconstructed the biosynthetic pathways for five tryptamines: psilocin and psilocybin, both found in hallucinogenic mushrooms; DMT, which is the psychoactive part of ayahuasca; and the psychedelic compounds bufotenin and 5-methoxy-DMT secreted by the Sonoran Desert toad.

The team then inserted the active genes of these pathways into the leaves of a tobacco plant, creating a botanical platform to produce all five psychedelics. By design, the modified plants are not able to pass these genes onto future generations, as this study is intended to offer a “proof of concept,” Berman said.

“In one leaf, we get five different psychedelics from three different kingdoms,” said Aharoni. “But since it is not inherited, it will stay in the leaves and will not go through to seeds, flowering, pollination, and to the next generation.”

“One reason that we did that is we are still not sure if we want to make plants where everybody can grab seeds from us and grow a plant with five different compounds” that might be deadly, he added. “We have to make sure that it stays in research.”

With that caveat, the team hopes that their work could lead to a method of tryptamine biosynthesis that could help meet the global demand for these compounds. In addition to sidestepping the disadvantages of synthetic versions, this technique could also remove stressors on wild populations. The goal is to ensure that wild tryptamine sources can be reserved for use in traditional Indigenous practices.

The researchers are also interested in clarifying the evolutionary purpose of psychedelic compounds for the plants that naturally produce them, which remains mysterious in many cases.

“We understand the importance of the plants, the fungi, and the Sonoran Desert toad, and every species that we discuss in the paper,” Berman said. “One of our motivations was to really understand better what these species do, so that we can mimic what they do.”

“Over-harvesting endangers the natural availability of these species for native peoples and Indigenous groups,” she concluded. “We have so much respect for the knowledge that they provide us, and we just want to add to this knowledge and to be able to produce these in a more sustainable way.”